RAG fine-tuning may cut retrieval accuracy by 40% and break agent decisions at scale

Technology

Published on 28 April 2026

Precision training can still worsen meaning detection

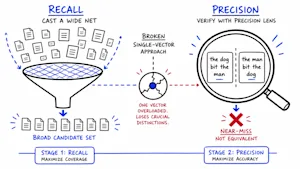

Redis research warns that fine-tuning RAG embedding models for “compositional sensitivity” can quietly harm general retrieval, cutting accuracy by up to 40% on mid-size production models. The issue: sentences whose meaning differs structurally, for example through negation or role reversal, can end up near-identical in embedding space, and common fine-tuning metrics miss it. Agentic pipelines are especially vulnerable.
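To make the embedding-space problem concrete, here is a minimal sketch. It assumes a toy bag-of-words "embedding" (the helper `bow_embed` is hypothetical and is not the model or method from the Redis research), chosen because it ignores word order in the same way the article says real embeddings can under-represent structure. The example sentences are invented for illustration.

```python
import math
from collections import Counter

def bow_embed(text):
    """Toy, order-insensitive embedding: a bag of token counts (an assumption for illustration)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

original = "the supplier pays the customer"
reversed_roles = "the customer pays the supplier"      # role reversal: opposite meaning
negated = "the supplier never pays the customer"       # negation: opposite meaning

sim_reversed = cosine(bow_embed(original), bow_embed(reversed_roles))
sim_negated = cosine(bow_embed(original), bow_embed(negated))

print(f"role reversal similarity: {sim_reversed:.3f}")
print(f"negation similarity:      {sim_negated:.3f}")
```

In this toy setup the role-reversed sentence is indistinguishable from the original and the negated one stays very close, even though both say the opposite. Real embedding models are far richer than token counts, so this only illustrates the failure mode and does not measure it.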

- Fine-tuning for precision can degrade broad retrieval generalization

- Negation and role reversal errors are harder to fix reliably

- Benchmark metrics may improve while production precision worsens

- A two-stage approach, recall followed by token-level verification, works best
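The two-stage idea in the last point can be sketched roughly as follows. This is a toy under stated assumptions, not the pipeline described in the research: stage one uses bag-of-words cosine similarity as a stand-in for an embedding model, and stage two uses two hand-written token checks (negation mismatch and reordered shared tokens). The function names `recall`, `verify`, and `retrieve`, the word lists, and the documents are all hypothetical.

```python
import math
from collections import Counter

NEGATIONS = {"not", "never", "no"}   # assumed, deliberately tiny negation list
STOPWORDS = {"the", "a", "an"}       # assumed stopword list

def tokens(text):
    return text.lower().split()

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def recall(query, docs, k=3):
    """Stage 1: broad recall, ranking by toy bag-of-words similarity and keeping the top k."""
    q = Counter(tokens(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokens(d))), reverse=True)
    return ranked[:k]

def verify(query, doc):
    """Stage 2: token-level check that rejects negation mismatches and reordered shared tokens."""
    q, d = tokens(query), tokens(doc)
    if bool(NEGATIONS & set(q)) != bool(NEGATIONS & set(d)):
        return False
    shared = (set(q) & set(d)) - STOPWORDS - NEGATIONS
    q_order = [t for t in q if t in shared]
    d_order = [t for t in d if t in shared]
    return q_order == d_order

def retrieve(query, docs, k=3):
    """Run recall first, then keep only candidates that pass verification."""
    return [d for d in recall(query, docs, k) if verify(query, d)]

docs = [
    "the supplier pays the customer",
    "the customer pays the supplier",
    "the supplier never pays the customer",
    "the warehouse ships the order",
]
query = "supplier pays customer"

candidates = recall(query, docs, k=3)
verified = retrieve(query, docs, k=3)
print("recalled:", candidates)
print("verified:", verified)
```

The design point is the split itself: the recall stage stays broad and cheap, while the structure-sensitive checks run only on the short candidate list. A production verifier would need something much stronger than these two rules.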


This summary was produced by Beige from a story published on Venture Beat
